Teaching & Learning

Curriculum, assessment, professional learning, and AI literacy — practical guidance for the classroom.

These guiding statements set out the VINE School's position on key teaching and learning issues related to AI. They are intended as a starting point for school-level discussion, not as prescriptive rules.

Guiding Statements

1.1

Critical AI Literacy

The VINE School integrates critical AI literacy into existing subject areas rather than teaching it as a standalone topic. Each faculty is responsible for identifying where AI intersects with its discipline and incorporating this into curriculum planning.

Critical AI literacy encompasses four domains, adapted from the OECD/EC framework: engaging with AI (understanding what it is and how it works), creating with AI (using AI technologies purposefully and ethically), managing AI (evaluating outputs, identifying bias, and understanding limitations), and designing with AI (contributing to how AI is developed and deployed).

In mathematics and numeracy, AI tools present distinct considerations: calculators and computational aids have long been part of the discipline, and schools should consider how generative AI extends or complicates existing norms around tool use in mathematical reasoning.

Critical AI literacy extends beyond "how to use AI" to encompass "when to use it, when not to, and why."

1.2

Academic Integrity

Academic integrity remains essential to all disciplines and must be clearly articulated to students. The VINE School's approach to academic integrity includes the responsible use of AI:

Academic integrity is based on trust, honesty, and respect. All sources must be acknowledged, including AI technologies. If AI has been used in any form for part or all of a task, the specific tools and the nature of their use must be disclosed.

1.3

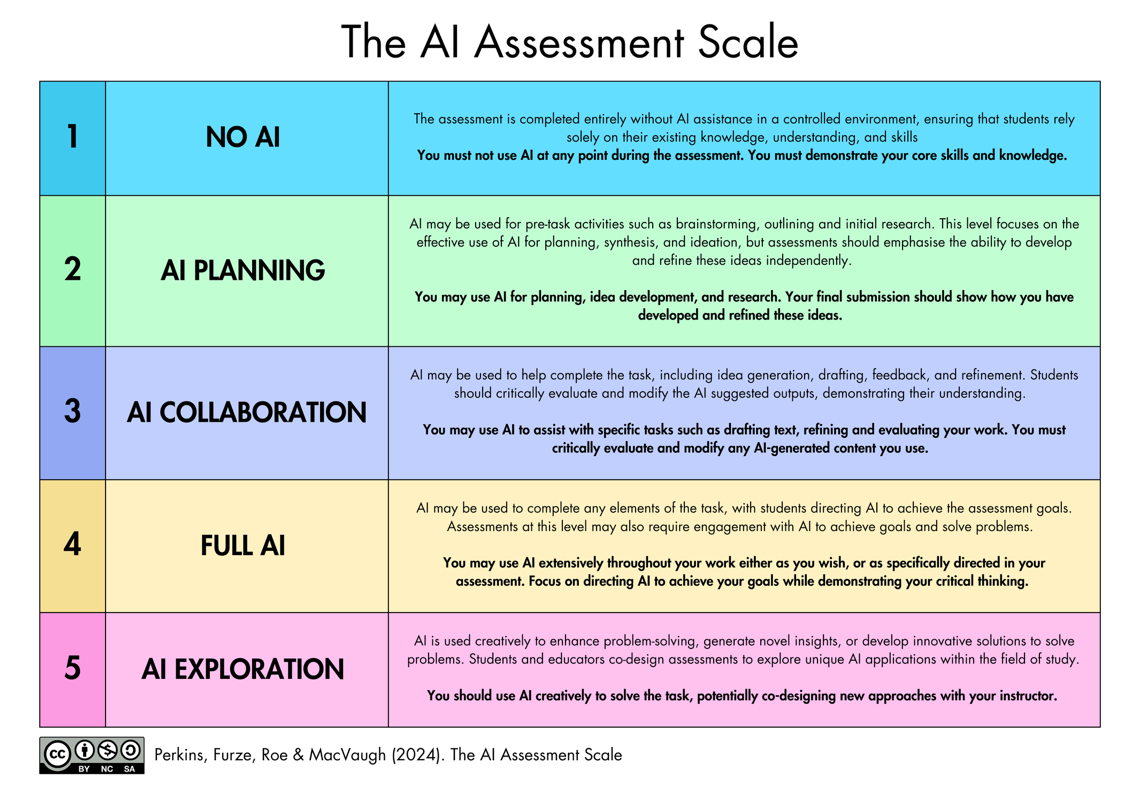

The AI Assessment Scale

The VINE School uses the AI Assessment Scale to provide a clear, shared framework for communicating the appropriate level of AI use in any given assessment task. The scale has five levels.

Different stages of an assessment task may require different levels of the scale. Any task or stage requiring Level 1 (No AI) must be completed in class under supervision.

1.4

Assessment Design

Assessment practices must be designed with AI in mind, not merely policed after the fact. The VINE School encourages assessment approaches that are resilient to AI misuse while remaining authentic and meaningful. These include:

- Process-based assessment (where the journey of learning is assessed, not just the final product)

- Oral defence and viva voce components

- Portfolio approaches that demonstrate growth over time

- Reflective journals documenting AI use and its impact on learning

- Collaborative tasks where individual contributions are visible

1.5

AI Detection

The VINE School does not approve the use of AI detection tools as the sole basis for academic integrity decisions. International evidence consistently demonstrates that detection tools produce unreliable results and are biased against multilingual learners and students for whom English is an additional language.

Schools seeking to address concerns about AI use in assessment should focus on assessment design and transparent expectations rather than detection.

1.6

Professional Learning

Staff require ongoing, embedded professional learning to engage with AI in their practice. The VINE School is committed to providing this as a school responsibility, not an individual one. Professional learning includes:

- At least one whole-staff session per semester that addresses AI, whether as a dedicated AI focus or integrated within broader teaching and learning priorities

- Regular opportunities through communities of practice and peer collaboration

- Designated time for teachers to explore and evaluate AI technologies relevant to their discipline

An AI Lead is designated to coordinate professional learning, curate resources, and serve as a point of contact for AI-related questions across the school.

1.7

Cognitive Offloading

The VINE School recognises that AI use exists on a spectrum from genuine learning support to "cognitive offloading", where students rely on AI instead of developing their own thinking. Teachers are supported to distinguish between AI use that scaffolds learning (operating within the student's zone of proximal development) and AI use that bypasses the learning altogether.

1.8

Staff Use of AI for Reporting and Administration

The use of speech-to-text tools and GenAI for proofreading, editing, and refining the clarity of report comments is approved, provided that the substance of the feedback reflects the teacher's own professional judgement. AI should not be used to wholly generate student feedback or report comments.

Key Roles, Key Questions

The following questions reflect the concerns that different stakeholders bring to teaching and learning in an AI-rich environment.

| Role | Key Questions | Guidance |
|---|---|---|
| Head of Curriculum | What does current research suggest about the impact of GenAI on critical and creative thinking? How do we redesign assessment without losing rigour? | 1.1, 1.3, 1.4, 1.7 |
| Teachers | What can I actually use AI for in my classroom? How do I set clear, consistent expectations for students? | 1.1, 1.2, 1.3 |
| Students | Can I use AI in class? Is it cheating? What exactly do I need to acknowledge, and how? | 1.2, 1.3 |
| Parents | Is my child learning, or is the AI learning for them? How would I know the difference? | 1.1, 1.7 |
| Principal | Are our assessment practices defensible? Are we keeping pace with comparable schools? | 1.3, 1.4, 1.6 |
| Board Directors | Are we treating AI governance with the same rigour as other high-risk compliance areas? | 1.2, 1.3, 1.4 |